A practical guide for healthcare enterprises seeking to innovate with AI while protecting patient data and meeting compliance requirements.

The Urgency to Move Quickly and the Price of Getting It Wrong

Healthcare organizations are under pressure to adopt AI, and the pressure is intensifying.

According to McKinsey, 78% of organizations now use AI in at least one business function, up from 55% just two years ago. Healthcare is among the sectors showing the highest adoption of agentic AI systems.

The question is no longer whether to deploy AI, but how to do so responsibly.

In a recent Technology Rivers panel discussion, Health AI Agents: What Does It Take to Succeed?, healthcare AI practitioners and compliance experts explored exactly this challenge: how to innovate safely in environments where patient data, regulatory exposure, and clinical outcomes are always at stake.

Yet the risks are real and growing. Gartner projects that over 40% of AI-related data breaches by 2027 will stem from improper or unapproved generative AI use. Nearly half of organizations using GenAI have already experienced problems ranging from made-up answers to data privacy and security breaches.

In healthcare, where HIPAA violations carry significant penalties and patient trust is foundational, these risks are not abstract.

The good news is that safe innovation is achievable through three architectural techniques: retrieval-augmented generation (RAG), sandboxing, and anonymization. Together, they allow healthcare organizations to experiment, iterate, and deploy AI without exposing sensitive systems or data. These are not theoretical concepts; they are practical safeguards that enable progress while maintaining control.

Reducing Hallucination & Protecting Data: How RAG Keeps AI Honest

One of the most significant risks with large language models in healthcare is hallucination: confident-sounding outputs that are factually wrong. In a clinical or compliance context, hallucinated information can lead to patient safety issues, regulatory violations, or operational errors that are difficult to trace.

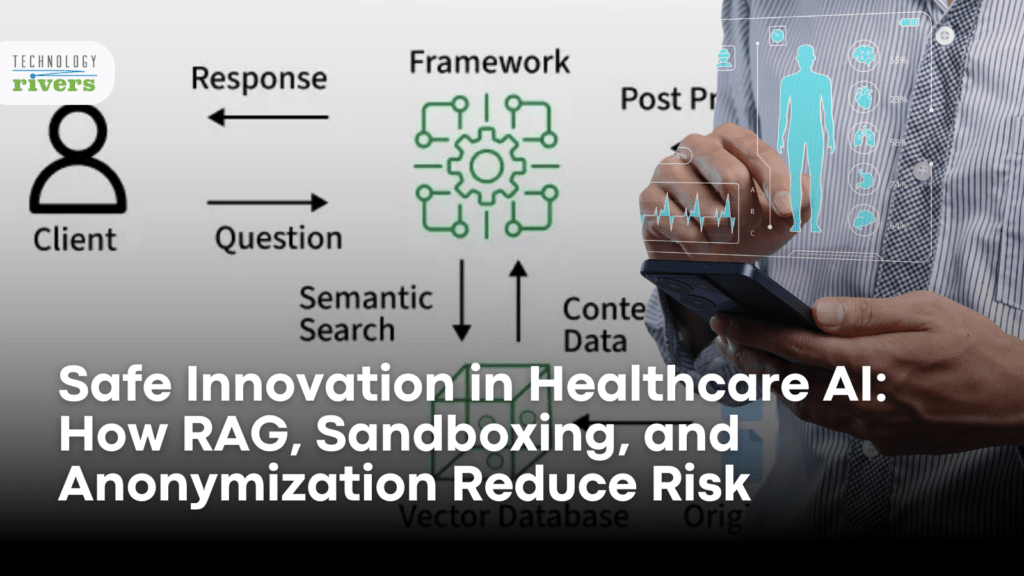

Retrieval-augmented generation addresses this by grounding AI responses in your organization’s actual data rather than relying solely on a model’s training. As Ghazenfer Mansoor, CEO of Technology Rivers, explained during a recent panel discussion on healthcare AI, RAG is the concept where you keep your internal data in your own internal database, a vector database. You still use the LLM for certain things, but you filter the results based on your own data, so you are really using the internal data to get the answers from your LLMs.

The architecture matters for compliance as well as accuracy. With RAG in healthcare AI, sensitive data stays within your controlled environment rather than being sent to external models for training.

The application queries your internal database, retrieves only the relevant passages, and the LLM generates a response grounded in them, so the underlying data store never leaves your infrastructure. This separation is fundamental for organizations building HIPAA-compliant AI systems.

Ghazenfer elaborated on why this matters: right now, everyone just feeds data into AI and asks it for all the answers. But by default, that data is used to train the LLMs. And when you have a lot of data, thousands of documents, there will be a lot of hallucination.

RAG limits what your model sees by giving it only the data it needs at that moment.
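As a rough illustration of that retrieve-then-generate flow, the sketch below ranks a few internal documents against a question and builds a prompt from only the best match. It is a minimal sketch under stated assumptions: the document IDs and texts are invented, the bag-of-words `embed` function stands in for a real embedding model and vector database, and the final call to an LLM is left out entirely.

```python
import math
import re
from collections import Counter

# Hypothetical internal documents; a real system would keep these in a vector database.
DOCUMENTS = {
    "policy-001": "Discharge summaries must be signed by the attending physician within 24 hours.",
    "policy-002": "Prior authorization is required for MRI imaging of the lumbar spine.",
    "policy-003": "Visitors are allowed in the cardiac unit between 10 am and 6 pm.",
}


def embed(text: str) -> Counter:
    """Toy embedding: word counts (an assumed stand-in for a real embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(question: str, k: int = 1) -> list[tuple[str, str]]:
    """Return the k documents most similar to the question."""
    query = embed(question)
    ranked = sorted(
        DOCUMENTS.items(),
        key=lambda item: cosine(query, embed(item[1])),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question: str, k: int = 1) -> str:
    """Assemble a prompt that contains only the retrieved passages."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in retrieve(question, k))
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say so.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )
```

Calling `build_prompt("Is prior authorization required for a lumbar MRI?")` yields a prompt that includes the imaging policy and leaves the other two documents out, which is the sense in which the model sees only what it needs.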

The Importance of Sandboxes: Testing Without Impacting Production

AI sandboxing creates isolated environments where teams can develop, test, and iterate on AI systems without touching production data or live clinical workflows.

This separation is critical for healthcare organizations that need to experiment with AI capabilities while maintaining strict controls over what reaches patients and clinicians.

The panel discussion addressed this directly. Ghazenfer described the approach: sandboxing means running the experiment in your test environment using anonymized data. You never use real data there, and any names that appear are dummy names. Combining these approaches reduces your risk, protects patient data, and allows teams to innovate faster without waiting for the perfect data set.

For organizations building AI and machine learning solutions, sandboxing serves multiple purposes. It allows rapid iteration without compliance review delays, enables testing of edge cases and failure modes before deployment, and provides a controlled environment for training staff on AI tools without risk to live systems.

The operational benefit is significant: teams can move quickly in the sandbox, validate that AI systems perform as intended, and then deploy to production with confidence rather than hope.
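One way to make that isolation enforceable rather than a convention is a guard that refuses to run an experiment unless the environment is clearly a sandbox. The sketch below is illustrative only: `EnvironmentConfig`, `SandboxViolation`, the host allowlist, and the connection strings are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from typing import Callable, TypeVar
from urllib.parse import urlparse

T = TypeVar("T")

# Hypothetical allowlist of database hosts that belong to the sandbox.
ALLOWED_SANDBOX_HOSTS = {"localhost", "sandbox-db.internal"}


@dataclass(frozen=True)
class EnvironmentConfig:
    name: str                # assumed labels: "sandbox" or "production"
    database_url: str        # hypothetical connection string
    data_is_synthetic: bool  # True when the data set is anonymized or dummy data


class SandboxViolation(RuntimeError):
    """Raised when an experiment would touch something outside the sandbox."""


def assert_sandbox(config: EnvironmentConfig) -> None:
    """Fail fast unless every sandbox condition holds."""
    if config.name != "sandbox":
        raise SandboxViolation(f"environment is {config.name!r}, not 'sandbox'")
    host = urlparse(config.database_url).hostname
    if host not in ALLOWED_SANDBOX_HOSTS:
        raise SandboxViolation(f"database host {host!r} is not on the sandbox allowlist")
    if not config.data_is_synthetic:
        raise SandboxViolation("data set is not marked as anonymized or synthetic")


def run_experiment(config: EnvironmentConfig, experiment: Callable[[], T]) -> T:
    """Run the experiment only after the sandbox checks pass."""
    assert_sandbox(config)
    return experiment()
```

An allowlist is used here instead of a blocklist so that an unknown host is rejected by default; whether that trade-off suits a given team depends on how its environments are provisioned.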

Developing Without Exposing Patient Information

Healthcare AI anonymization removes or obscures protected health information from data sets used for development and testing. This allows organizations to work with realistic data structures and patterns without exposing actual patient information to AI models or development teams.

Ghazenfer explained the practical application: you have PHI data for doing development. During testing, you do not want the real data exposed, and even when you are giving it to LLMs, there is no need to give everything.

For example, you can describe a 60-year-old male of a given ethnicity with conditions X, Y, and Z without giving any specific PHI. Anonymizing in this way lets you test, develop, and iterate without exposing your PHI.

This technique is particularly valuable for healthcare applications that need to be trained or tested on clinically representative data. Anonymization preserves the statistical properties and patterns that matter for AI performance while removing the identifiers that create compliance risk.
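The "male, 60-year-old, has X, Y, Z" example can be sketched in a few lines. This is a simplified illustration, not a complete de-identification method: the field names and the list of direct identifiers are assumptions made for the example, and the rule that reports ages above 89 as 90 only loosely mirrors the 90-or-older category used in HIPAA Safe Harbor.

```python
from datetime import date

# Assumed field names for direct identifiers; a real schema will differ.
DIRECT_IDENTIFIERS = {"name", "mrn", "ssn", "address", "phone", "email"}


def age_on(birth_date: date, today: date) -> int:
    """Whole years between birth_date and today."""
    years = today.year - birth_date.year
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years


def anonymize(record: dict, today: date) -> dict:
    """Drop direct identifiers and replace the birth date with a capped age."""
    safe = {
        key: value
        for key, value in record.items()
        if key not in DIRECT_IDENTIFIERS and key != "birth_date"
    }
    # Ages above 89 are reported as 90 in this sketch.
    safe["age"] = min(age_on(record["birth_date"], today), 90)
    return safe


def describe(safe: dict) -> str:
    """Render the anonymized record as text suitable for a prompt or test case."""
    conditions = ", ".join(safe["conditions"])
    return f"{safe['sex']}, {safe['age']}-year-old, {safe['ethnicity']}, has {conditions}"
```

Passing the output of `anonymize` to `describe` gives a description that keeps the clinically relevant attributes while the name, record number, and birth date never reach the model or the development team.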

The Boundary Between AI and Human Expertise

Technical safeguards like RAG, sandboxing, and anonymization reduce risk, but they do not eliminate the need for human judgment in high-stakes decisions. The panel was clear that safe AI innovation requires knowing where humans must remain in the loop.

During the webinar discussion, Archna drew a direct line: the most important safeguard is the human in the loop. She would route high-risk cases, ambiguous inputs, and complex situations that require empathy and judgment to a human reviewer. AI handles volume and pattern recognition, while humans handle judgment and accountability.

This design principle also addresses a challenge that is often overlooked: organizational fear. Archna noted that safe AI architecture reduces resistance to adoption. When you implement this culture in an organization, you reduce the fear factor.

Everybody is scared of AI taking their job; you have to address it right away and elevate the human to do the work that requires judgment, empathy, and complex decision-making, while you let AI handle the rest.

Safe design is not just a technical decision. It is a change management strategy that positions humans as essential partners rather than obstacles to automation.

McKinsey’s research supports this approach. Organizations that define clear processes to determine how and when AI outputs need human validation are significantly more likely to achieve value from their AI investments. The question is not whether to include humans; it is where in the workflow human review adds the most value.
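A process for deciding when AI output needs human validation can start as a small, explicit routing rule. The sketch below is one possible shape for such a rule; the `Case` fields, the risk labels, and the 0.85 confidence threshold are assumptions for illustration, not values recommended by the panel.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTOMATE = "automate"
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Case:
    risk: str                # assumed labels: "low", "medium", "high"
    model_confidence: float  # assumed score in [0, 1]; low values signal ambiguity
    needs_empathy: bool      # e.g. a conversation that calls for human judgment


def route(case: Case, min_confidence: float = 0.85) -> Route:
    """Send high-risk, ambiguous, or empathy-dependent cases to a human."""
    if case.risk == "high":
        return Route.HUMAN_REVIEW
    if case.needs_empathy:
        return Route.HUMAN_REVIEW
    if case.model_confidence < min_confidence:
        return Route.HUMAN_REVIEW
    return Route.AUTOMATE
```

Keeping the rule this explicit makes it easy to review with clinical and compliance staff, and the threshold can be tightened or loosened as the team gathers evidence about where human review adds the most value.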

Keeping Every Decision Trackable

The techniques described above enable safe experimentation. But scaling AI across an enterprise requires something more: a governance infrastructure that provides visibility into what AI systems are doing and why.

This kind of infrastructure helps with understanding and debugging solutions and also supports HIPAA, GDPR, and SOC 2 compliance, as well as FDA expectations, by enabling analysis of cases where the AI model is failing. Without comprehensive logging, organizations cannot investigate incidents, demonstrate compliance to auditors, or identify patterns that indicate system problems.
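To make the idea of comprehensive logging concrete, here is a minimal sketch of a structured audit entry for a single AI response. Every field name is an assumption chosen for illustration. Storing SHA-256 hashes instead of raw prompt and output text is likewise just one design option, and hashing on its own should not be treated as anonymization.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional, Sequence


def sha256_hex(text: str) -> str:
    """Hex digest used to reference text without storing it in the log."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def audit_record(
    request_id: str,
    model_version: str,
    prompt: str,
    retrieved_ids: Sequence[str],
    output: str,
    reviewer: Optional[str] = None,
    now: Optional[datetime] = None,
) -> str:
    """Build one JSON log line describing what the AI system did and with what inputs."""
    timestamp = now or datetime.now(timezone.utc)
    entry = {
        "timestamp": timestamp.isoformat(),
        "request_id": request_id,
        "model_version": model_version,
        "prompt_sha256": sha256_hex(prompt),
        "retrieved_ids": list(retrieved_ids),  # which internal documents grounded the answer
        "output_sha256": sha256_hex(output),
        "human_reviewer": reviewer,            # None when no human validated the output
    }
    return json.dumps(entry, sort_keys=True)
```

Each line can be appended to whatever log store the organization already uses; the point is that an investigator can later tie a response to a model version, the documents it drew on, and the person who reviewed it.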

Megan, a quality and regulatory expert on the panel, framed this as a design requirement rather than an afterthought.

Gartner’s research indicates that 55% of organizations now have an AI board or dedicated oversight committee, a tangible shift from informal sponsorship to formal governance structures. Organizations with dedicated oversight are more likely to integrate risk monitoring, accountability, and continuous review into their operating model.

Building Trust Before Scaling: From Pilot to Production

The techniques outlined here—RAG, sandboxing, anonymization, human-in-the-loop, and governance infrastructure—are not obstacles to innovation. They are the foundation that allows innovation to scale.

Ghazenfer offered practical guidance for implementation, saying the best approach for enterprises, or in fact for anybody, is to start with one workflow and then implement one flow at a time. Once that is done, you move on to the second one.

Safe design enables this incremental approach by ensuring each deployment is controlled, auditable, and reversible.

Megan reinforced the stakes: health AI agents are most successful when they start with a very narrow scope. If even one of those steps fails, it is very difficult, especially in healthcare, to win back trust, because trust is earned incrementally.

Technology Rivers approaches this through a structured process: designing compliant AI architectures with auditability built in, using sandboxed environments for development and testing, implementing RAG to reduce hallucination and protect data, and scaling only after validating results.

The sequence matters: design for safety first, test in isolation, control data exposure, then scale responsibly.

The Regulatory Horizon: What Leaders Should Prepare For

The regulatory environment around AI is tightening. California’s AI Transparency Act takes effect in 2026, and federal regulators are drafting frameworks for AI governance in healthcare.

The EU AI Act establishes risk-based requirements that affect any organization operating in European markets. For healthcare enterprises, this means AI risk reduction techniques are becoming compliance requirements, not just best practices.

McKinsey’s research shows that public confidence in AI providers has slipped from 61% in 2019 to 53% in 2024. In healthcare, that skepticism could hinder adoption of AI-powered clinical tools unless organizations demonstrate explainability, fairness, and transparency. Organizations that invest in trust-building measures, including the safeguards described here, will be better positioned to deploy AI at scale.

As agentic AI systems take on more autonomous decision-making, the need for governance grows proportionally. Gartner predicts that by 2028, 40% of CIOs will demand Guardian Agents to autonomously track, oversee, or contain the results of AI agent actions. The organizations building governance infrastructure now will be ready for that future.

Reconciling Speed and Safety

Safe innovation in healthcare AI workflows is not about avoiding risk. It is about managing risk systematically.

- RAG reduces hallucination and keeps sensitive data within your control.

- Sandboxing enables experimentation without production exposure.

- Anonymization allows development and testing without PHI risk.

- Human oversight ensures judgment and accountability where they matter most.

- Governance infrastructure provides the visibility and traceability that compliance and trust require.

The question for enterprise leaders is not whether these safeguards slow innovation. It is whether your organization can afford to innovate without them.

Watch the Full Discussion

This article draws on insights from the webinar Health AI Agents: What Does It Take to Succeed?, featuring healthcare AI practitioners, compliance experts, and enterprise technology leaders. Watch the full recording to explore additional topics, including workflow automation, multi-agent systems, and governance architecture.

Build AI Systems You Can Trust

Every healthcare AI deployment eventually faces scrutiny: a compliance audit, a patient safety review, a board asking how decisions are being made. The organizations that answer confidently are the ones that built safeguards in from the start.

Technology Rivers helps healthcare organizations design AI systems with RAG, sandboxing, and governance infrastructure built into the architecture, not bolted on afterward. If your team is planning an AI initiative and wants to get the foundation right, let’s talk.