Ever wished you could chat with your own documents? Ask questions about company policies, pull facts from reports, or search through your notes using plain English?

That’s exactly what RAG applications do – and with LangChain plus Claude, building one is surprisingly straightforward.

At Technology Rivers, we’ve built intelligent document systems internally to explore how RAG can transform the way teams access and use knowledge. From healthcare providers searching clinical guidelines to enterprises creating intelligent support systems, these applications are reshaping information access across industries.

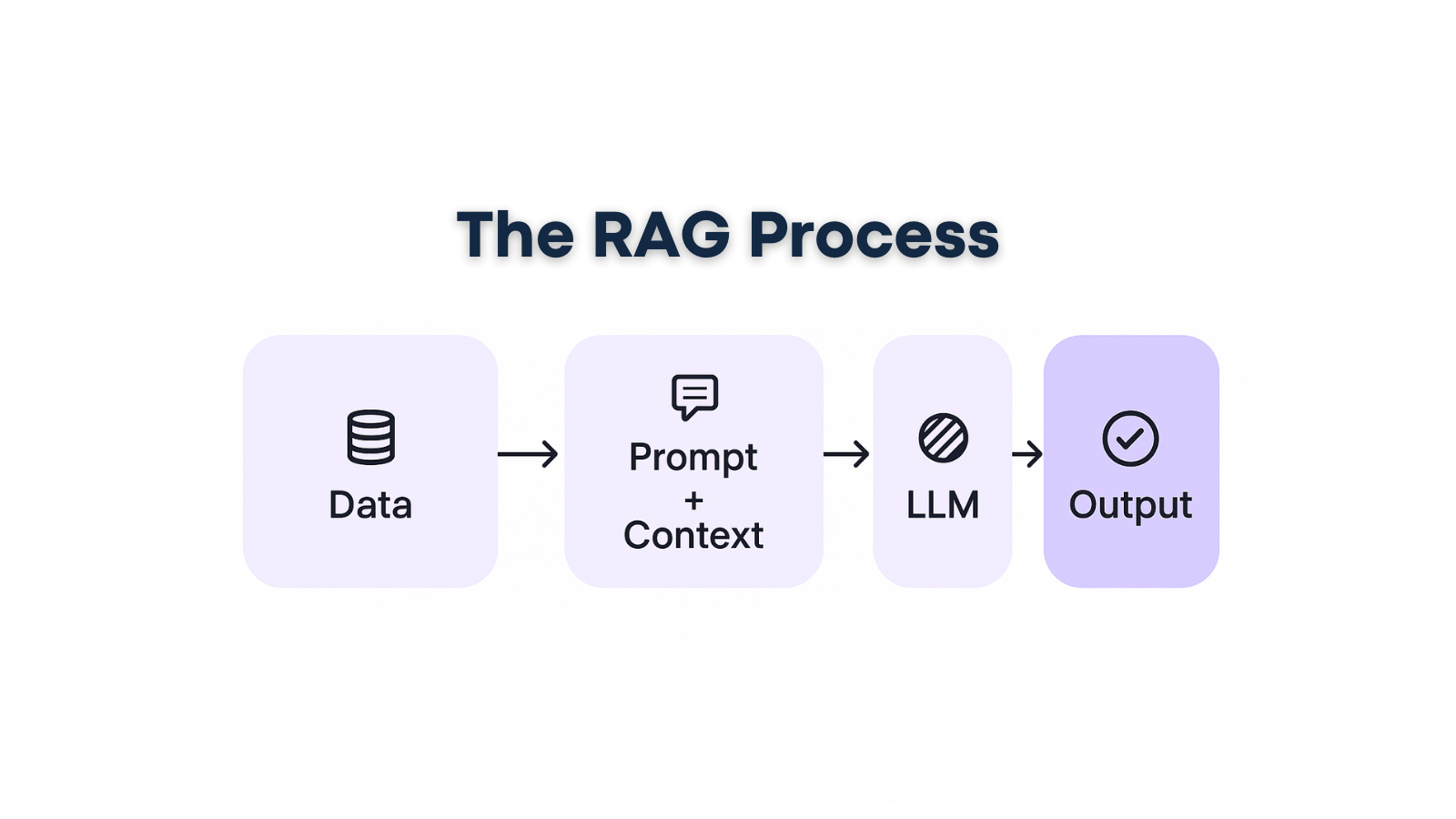

What Is RAG?

RAG = Retrieval-Augmented Generation

Think of it like an open-book exam:

- Retrieve: Look up relevant pages from your documents

- Augment: Give those pages + the question to your AI assistant

- Generate: Get an intelligent answer based on your actual content

Instead of relying on what an AI model learned during training, RAG lets it access your specific documents to provide accurate, source-backed answers. It’s the difference between asking a stranger about your company versus asking someone who just read your entire employee handbook.
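
To make the three steps concrete, here is a minimal, library-free Python sketch. The word-overlap scoring and the `fake_llm` function are assumptions made up for illustration; a real system would use embeddings for retrieval and a model such as Claude for generation.

```python
import re

def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question: str, pages: list[str], k: int = 1) -> list[str]:
    """Retrieve: rank pages by how many words they share with the question."""
    q = tokenize(question)
    ranked = sorted(pages, key=lambda p: len(q & tokenize(p)), reverse=True)
    return ranked[:k]

def augment(question: str, context: list[str]) -> str:
    """Augment: put the retrieved pages and the question into one prompt."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Context:\n{joined}\n\nQuestion: {question}"

def fake_llm(prompt: str) -> str:
    """Generate: hypothetical stand-in for a real model call."""
    return f"(model answer based on a prompt of {len(prompt)} characters)"

# Made-up sample pages, standing in for an employee handbook.
pages = [
    "Employees accrue 1.5 vacation days per month.",
    "Expense reports are due by the 5th of each month.",
]
question = "How many vacation days do employees accrue?"
context = retrieve(question, pages)
prompt = augment(question, context)
answer = fake_llm(prompt)
```

Here `context` ends up holding only the vacation page, so the model sees the relevant text rather than the whole handbook.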

Why RAG Applications Matter for Business

On their own, language models are trained on general, mostly public data. They don’t automatically know your private files, internal policies, or the latest updates in your organization. RAG bridges this gap by grounding AI responses in your actual content.

Key Benefits:

- Fewer Hallucinations: Answers are based on retrieved text from your documents

- Always Current: Answers reflect your latest files and updates as soon as they are indexed, with no model retraining

- Domain-Specific: Tailored to your industry, company, or personal knowledge

- Transparent: Show exactly where each answer came from

- Secure: Keep sensitive data within your control

Some industry reports put the reduction in AI hallucinations from RAG at up to 49%, and the ability to attribute every answer to a source is critical for regulated industries like healthcare and finance.

Real-World RAG Applications

Customer Support: “What’s our return policy for electronics?”

→ Searches policy documents, returns the correct section with a clear summary

Business Intelligence: “What did the Q3 report say about sales growth?”

→ Finds relevant slides and paragraphs, summarizes with citations

Healthcare: “What are the latest treatment protocols for this condition?”

→ Searches clinical guidelines and research, provides evidence-based answers

Personal Knowledge: “Find that budget template I saved last month”

→ Locates the file and highlights the relevant sections

How LangChain Simplifies RAG Development

LangChain provides the essential building blocks for RAG applications without requiring you to build everything from scratch:

Document Processing

Load PDFs, Word documents, and text files. Clean and split long documents into searchable chunks automatically.
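
As a rough illustration of what “splitting into chunks” means, the sketch below cuts text into fixed-size, overlapping character windows. The function name and the sizes are assumptions for this example; LangChain ships its own splitters (for instance `RecursiveCharacterTextSplitter`), which also try to break on natural boundaries such as paragraphs.

```python
def split_text(text: str, chunk_size: int = 200, overlap: int = 40) -> list[str]:
    """Split text into chunks of at most chunk_size characters.

    Consecutive chunks share `overlap` characters, so a sentence cut at a
    chunk boundary still appears intact in one of the two chunks.
    """
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # the last window already reached the end of the text
    return chunks

chunks = split_text("a" * 500, chunk_size=200, overlap=40)
```

Chunk size and overlap are tuning knobs: smaller chunks give more precise matches, while larger ones keep more surrounding context.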

Smart Search Capabilities

Create embeddings (numeric representations of text meaning) and store them in a vector store such as Chroma, Pinecone, or FAISS. Enable semantic search that matches on meaning, not just keywords.
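
Semantic search usually comes down to comparing vectors, most often with cosine similarity. The sketch below uses tiny three-dimensional vectors that are made up for illustration; real embedding models return hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Return the cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

# Hypothetical embeddings, invented for this example.
embeddings = {
    "refund policy": [0.9, 0.1, 0.0],
    "how to return an item": [0.8, 0.2, 0.1],
    "quarterly sales growth": [0.0, 0.2, 0.9],
}
query = embeddings["how to return an item"]
scores = {text: cosine_similarity(query, vec) for text, vec in embeddings.items()}
```

With these vectors, “refund policy” scores far higher against the query than “quarterly sales growth”, even though the query and “refund policy” share no words.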

Model Integration

Connect to AI providers like Anthropic (Claude) and OpenAI through a common interface. LangChain’s integrations take care of API calls, error handling, and response parsing for you.

Composability & Templates

Use reusable prompts, chains, and guardrails including retries, timeouts, and validation. Focus on your use case instead of technical plumbing.
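
To show what a retry guardrail does under the hood, here is a small sketch with exponential backoff. The helper name, the delays, and the flaky test function are all assumptions for illustration; in practice you would more likely rely on the retry options your framework or API client provides.

```python
import time

def call_with_retries(fn, max_attempts: int = 3, base_delay: float = 0.5, sleep=time.sleep):
    """Call fn(); on failure, wait and retry, doubling the delay each time."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except Exception:  # a real system would catch only transient errors
            if attempt == max_attempts:
                raise
            sleep(base_delay * 2 ** (attempt - 1))

# Hypothetical model call that fails twice, then succeeds.
calls = {"count": 0}

def flaky_model_call() -> str:
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("temporary failure")
    return "ok"

delays = []  # record the waits instead of actually sleeping
result = call_with_retries(flaky_model_call, sleep=delays.append)
```

Passing `sleep` as a parameter keeps the helper easy to test without real waiting.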

Building Your First RAG Application: The Blueprint

Step 1: Project Foundation

Set up a backend using Node.js/Express or Python/FastAPI. Install LangChain and your chosen vector database client. Configure environment variables and optionally add Docker for consistent deployment.
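
For the environment variables, one possible approach on a Python backend is to load everything into a single settings object at startup. `ANTHROPIC_API_KEY` is the variable Anthropic’s SDK reads by default; `VECTOR_DB_PATH`, `CHUNK_SIZE`, and their default values are hypothetical names chosen for this sketch.

```python
import os
from dataclasses import dataclass

@dataclass(frozen=True)
class Settings:
    anthropic_api_key: str
    vector_db_path: str
    chunk_size: int

def load_settings(env=os.environ) -> Settings:
    """Read settings from environment variables, failing fast if the API key is missing."""
    api_key = env.get("ANTHROPIC_API_KEY")
    if not api_key:
        raise RuntimeError("ANTHROPIC_API_KEY is not set")
    return Settings(
        anthropic_api_key=api_key,
        vector_db_path=env.get("VECTOR_DB_PATH", "./vector_store"),  # assumed default
        chunk_size=int(env.get("CHUNK_SIZE", "800")),                # assumed default
    )

# Example with an explicit mapping instead of the real environment.
settings = load_settings({"ANTHROPIC_API_KEY": "test-key", "CHUNK_SIZE": "500"})
```

Failing at startup when the key is missing is usually friendlier than failing on the first user question.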

Step 2: Document Ingestion

Create file upload endpoints with proper validation. Use LangChain loaders for different file types (PDFs, Word, Markdown). Split documents into chunks and generate embeddings, then store everything in your vector database with metadata.
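
The validation and metadata parts of this step could look roughly like the sketch below. The allowed extensions, the 10 MB limit, and the record layout are assumptions; text extraction and embedding are left out, and `pages` stands for text already extracted by a document loader.

```python
from pathlib import Path

ALLOWED_EXTENSIONS = {".pdf", ".docx", ".md", ".txt"}  # assumed whitelist
MAX_BYTES = 10 * 1024 * 1024                           # assumed 10 MB limit

def validate_upload(filename: str, size_bytes: int) -> None:
    """Reject files with an unexpected extension or size before processing them."""
    ext = Path(filename).suffix.lower()
    if ext not in ALLOWED_EXTENSIONS:
        raise ValueError(f"unsupported file type: {ext or 'none'}")
    if size_bytes > MAX_BYTES:
        raise ValueError("file too large")

def build_records(filename: str, pages: list[str]) -> list[dict]:
    """Turn extracted page texts into records that carry their source metadata."""
    records = []
    for page_number, page_text in enumerate(pages, start=1):
        for para in page_text.split("\n\n"):
            para = para.strip()
            if para:
                records.append({"text": para, "source": filename, "page": page_number})
    return records

validate_upload("handbook.pdf", 2_000_000)
records = build_records(
    "handbook.pdf",
    ["Vacation policy.\n\nSick leave policy.", "Expense rules."],
)
```

Keeping the filename and page number on every record is what later makes it possible to show sources next to an answer.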

Step 3: Smart Retrieval System

When users ask questions, embed the query and run similarity search against your document chunks. Return the most relevant content pieces along with their source information.
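
A minimal sketch of that lookup follows. The `toy_embed` function simply counts words and is a stand-in for a real embedding model, and the in-memory list stands in for a vector database; both are assumptions for illustration, as are the sample chunks.

```python
import heapq
import math
import re
from collections import Counter

def toy_embed(text: str) -> Counter:
    """Stand-in embedding: word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def search(question: str, index: list[dict], k: int = 2) -> list[dict]:
    """Embed the question and return the k most similar chunks, with scores and sources."""
    q_vec = toy_embed(question)
    scored = [{**rec, "score": similarity(q_vec, rec["vector"])} for rec in index]
    return heapq.nlargest(k, scored, key=lambda r: r["score"])

# Made-up sample chunks with source metadata.
chunks = [
    {"text": "Electronics can be returned within 30 days.", "source": "returns.pdf", "page": 2},
    {"text": "Sales growth is summarized in the quarterly report.", "source": "q3-report.pdf", "page": 5},
    {"text": "Returned items need the original receipt.", "source": "returns.pdf", "page": 3},
]
index = [{**c, "vector": toy_embed(c["text"])} for c in chunks]
hits = search("Can electronics be returned?", index, k=2)
```

Both hits come from `returns.pdf`, and each one still carries the filename and page needed for a citation.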

Step 4: Intelligent Response Generation

Build prompts that include the user’s question, retrieved document snippets with citations, and clear instructions like “answer based on the provided documents; if unsure, say so.” Then call Claude to generate a contextual response.
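
One way to assemble such a prompt is sketched below. The numbering scheme and the exact wording are assumptions, not a required format; the snippet fields (`text`, `source`, `page`) are hypothetical names for whatever your retrieval step returns.

```python
def build_prompt(question: str, snippets: list[dict]) -> str:
    """Combine instructions, numbered source snippets, and the question into one prompt."""
    lines = [
        "Answer based on the provided documents; if unsure, say so.",
        "Cite sources using the bracketed numbers.",
        "",
        "Documents:",
    ]
    for i, s in enumerate(snippets, start=1):
        lines.append(f"[{i}] ({s['source']}, p. {s['page']}) {s['text']}")
    lines += ["", f"Question: {question}"]
    return "\n".join(lines)

# Made-up snippet for illustration.
prompt = build_prompt(
    "What is the return window for electronics?",
    [{"text": "Electronics can be returned within 30 days.", "source": "returns.pdf", "page": 2}],
)
```

The resulting string would then be sent to Claude, for example through LangChain’s `ChatAnthropic` integration or Anthropic’s own SDK.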

Step 5: User Interface

Create a clean interface with an input box for questions, streaming responses for better user experience, and a sources panel showing filenames, page numbers, and links to original documents.
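
If the backend streams over server-sent events, the stream feeding that interface might be shaped like the sketch below: one event per text fragment, then a final event carrying the sources panel data. The event payload fields (`type`, `text`, `items`) are assumptions for this example, and the fragments would come from the model’s streaming API rather than a fixed list.

```python
import json
from typing import Iterable, Iterator

def sse_events(fragments: Iterable[str], sources: list[dict]) -> Iterator[str]:
    """Yield server-sent events: one per text fragment, then one listing the sources."""
    for fragment in fragments:
        payload = json.dumps({"type": "token", "text": fragment})
        yield "data: " + payload + "\n\n"
    payload = json.dumps({"type": "sources", "items": sources})
    yield "data: " + payload + "\n\n"

# Made-up fragments and source for illustration.
events = list(
    sse_events(
        ["Electronics can be ", "returned within 30 days."],
        [{"source": "returns.pdf", "page": 2}],
    )
)
```

The front end can append each `token` event to the answer as it arrives and fill in the sources panel when the final event lands.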

Essential Components You’ll Need

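API Access: Anthropic Claude and/or OpenAI keys for the generation component, plus access to an embedding model (Anthropic does not currently offer one of its own, so Claude-based setups pair it with a separate embedding provider)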

Vector Database: Choose from Chroma (local development), Pinecone (managed service), or FAISS (an in-process similarity search library)

Your Content: PDFs, Word documents, text files, or other document formats

Development Environment: Node.js or Python with your preferred package manager

Nice-to-Have Additions:

- Docker for reproducible development environments

- Authentication system for internal applications

- Logging and metrics for performance monitoring

- Evaluation frameworks for answer quality

Production-Ready Best Practices

Start Simple: Begin with a citation-first prototype that clearly shows sources for every answer.

Stay Honest: Configure your system to say “I don’t know” when evidence is missing rather than generating unsupported responses.
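
Besides instructing the model, one simple safeguard is to skip generation entirely when retrieval finds nothing relevant. The sketch below assumes each retrieved chunk carries a similarity `score`; the 0.3 threshold and the fallback wording are made-up values you would tune for your own data.

```python
NO_ANSWER = "I don't know based on the provided documents."

def answer_or_decline(hits: list[dict], generate, min_score: float = 0.3) -> str:
    """Call the model only when at least one retrieved chunk clears the threshold."""
    evidence = [h for h in hits if h["score"] >= min_score]
    if not evidence:
        return NO_ANSWER
    return generate(evidence)

def fake_generate(evidence: list[dict]) -> str:
    """Hypothetical stand-in for the real model call."""
    return f"answer from {len(evidence)} chunk(s)"

weak = answer_or_decline([{"text": "unrelated", "score": 0.1}], fake_generate)
strong = answer_or_decline(
    [{"text": "relevant", "score": 0.8}, {"text": "noise", "score": 0.2}],
    fake_generate,
)
```

Here `weak` is the fallback message, while `strong` is generated from the single chunk that cleared the threshold.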

Optimize Retrieval: Focus on improving document chunking and search quality over time – better retrieval leads to better answers.

Security First: Implement proper authentication, data encryption, and access controls, especially for sensitive business documents.

Monitor Performance: Track response quality, user satisfaction, and system performance to identify improvement opportunities.

Industry Applications Driving Growth

Healthcare Organizations use RAG for clinical decision support, medical literature search, and patient information systems while maintaining HIPAA compliance.

Enterprise Companies deploy RAG for employee onboarding, policy assistance, technical documentation, and intelligent customer support systems.

Financial Services leverage RAG for regulatory compliance monitoring, risk assessment, and complex policy interpretation with complete audit trails.

The RAG market is projected to grow from $1.2 billion in 2024 to $67 billion by 2034, driven by organizations recognizing the value of accurate, source-attributed AI responses.

Getting Started: Your First RAG Prompt

Ready to begin? Start with this simple prompt when working with Claude or your development team:

“Help me build a RAG application that answers questions from our [document set], shows sources clearly, and says ‘I don’t know’ when evidence is missing from the retrieved content.”

From there, you can expand into authentication, analytics, improved user experience, and production deployment at your own pace.

Why Choose Technology Rivers for RAG Development

Building production-ready RAG applications requires more than just technical knowledge – it demands an understanding of your specific industry requirements, security needs, and scalability challenges.

At Technology Rivers, we specialize in AI-driven development with deep expertise in healthcare, enterprise, and regulated industries. Our team combines RAG technical proficiency with practical implementation experience, ensuring your intelligent document system scales securely with your business needs.

Whether you’re exploring intelligent document search, automated customer support, or enterprise knowledge systems, RAG applications provide the foundation for AI that’s both powerful and trustworthy.

Ready to transform how your organization accesses and uses its knowledge? Let’s discuss your specific requirements and build a RAG solution that delivers real business value while maintaining complete control over your sensitive information.