How to Train and Deploy a Simple Machine Learning Model using Amazon SageMaker

As your business grows, you start accumulating data – lots and lots of data coming in through varied streams. This leads to a tendency to start asking questions of the collected data – the more questions you ask of your data, the more insight you will get. This is how your data yields hidden knowledge that has the potential to transform your business.

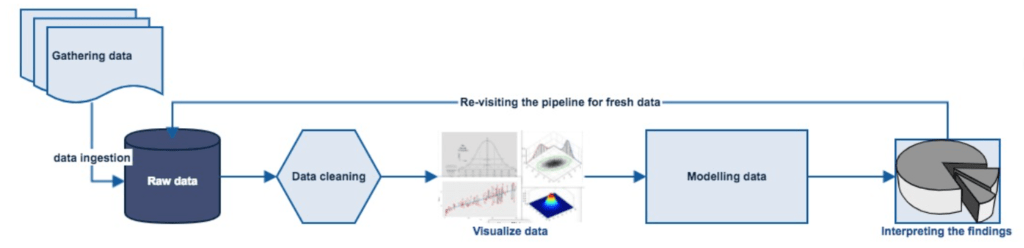

By running your data through a typical data analytics pipeline, like the one shown above, you can answer many questions for your business, such as:

- What should be the sustainable growth goals for the next decade?

- Which areas in customer relationship management require improvements?

- How to optimize the productivity of people and resources?

- What steps should be taken to ensure employee attraction and retention?

- Which metrics should be considered in order to expand the existing business to a new location?

Source: dzone.com

After cleaning your data and finding out what features are the most important, you can use this data to train a machine learning (ML) model that can help enhance your business decision-making through artificial intelligence (AI).

Nowadays, there are innumerable off-the-shelf applications out there that enable ML/AI app development for small to large-scale organizations alike. ML/AI apps help in developing predictive models that can make predictions to guide smart actions with little to no human intervention. In addition to this, they pave the way for reducing the costs and hurdles of AI adoption and digital transformation in organizations.

With cloud and distributed computing technologies revolutionizing the way data is collected and stored, ML and AI are becoming more ubiquitous than ever in mobile devices, where they can make an app more user-friendly and intelligent. As an example of how we at Technology Rivers harness ML and AI in our services, our Sales Training and Coaching Application for Ripcord uses Natural Language Processing (NLP) and speech recognition to convert conversations into live transcripts, so that feedback can be given while a representative is still on a call. Similarly, our intelligent Academic Pathway Planning software for enterprises uses predictive analytics to draft the most suitable education path, occupations, and thousands of job possibilities while nudging you to stay on track.

As mentioned earlier, there are many ML/AI apps for training a predictive model, like IBM Watson, Google’s Vertex AI, Microsoft’s Azure Machine Learning Studio, and TensorFlow, to name a few. Although each of these comes with its own features and merits, in this article we will take you through Amazon Web Services’ (AWS) integrated development environment (IDE) for ML: Amazon SageMaker Studio. In this tutorial, you will learn how to train and ultimately deploy a simple ML model using Amazon SageMaker.

Amazon SageMaker 101

SageMaker is a cloud-based machine-learning platform by Amazon Web Services for creating, training, and deploying machine-learning models in the cloud as well as on embedded systems and edge devices. It helps businesses get from early experimentation to fully scalable production quickly, without having to spend time on setup.

The SageMaker Basics

Amazon SageMaker includes the following features:

SageMaker Studio

Amazon SageMaker Studio is an integrated ML environment that lets you build, train, deploy, and evaluate your models, all in one place.

Projects

SageMaker lets you create end-to-end ML solutions with incremental code changes by using SageMaker projects.

Model Building Pipelines

It lets you create and manage ML pipelines integrated directly with SageMaker jobs.

ML Lineage Tracing

With SageMaker, you can trace the lineage of ML workflows.

Data Wrangling

SageMaker lets you integrate Data Wrangler into your ML workflows. This helps in simplifying and formalizing data pre-processing and feature engineering using little to no coding. Additionally, you can also add your Python scripts to customize your data preparation workflow.

SageMaker Feature Store

The Feature Store in SageMaker is the go-to place for discovering and using features and their associated metadata. You can create two types of stores: an online store and an offline store.

JumpStart

SageMaker lets you learn about its features and capabilities through curated one-click solutions, example notebooks, and pre-trained models that you can instantly deploy and use, with the ability to fine-tune them as per your requirements.

Amazon Augmented AI

Amazon Augmented AI or A2I eliminates the burden associated with building complicated and large human review systems.

Notebooks

With Amazon SageMaker notebooks, you can make use of AWS’s Single Sign-On (AWS SSO) feature, along with speedy start-up times, and one-click sharing capabilities.

Experiments

SageMaker comes with the ability to reproduce experiments through tracked data or build on experiments through multiple collaborators.

Debugger

SageMaker Debugger identifies commonly occurring errors during the model training and deployment phases.

Autopilot

Autopilot in SageMaker lets users with no ML background build classification and regression models (and the like) with ease.

Model Monitor

SageMaker enables the user to evaluate models in production (endpoints) to identify data drift and variations in model quality.

Batch Transform

SageMaker allows you to reformat datasets, run inference without needing a persistent endpoint, and compare inputs with inferences to support predictive analysis.

Why SageMaker for an ML Model?

There are many merits to using SageMaker for ML and AI.

First, it lets you quickly and easily build ML models and directly deploy them into a production-ready hosted environment. Meaning, there is no setup overhead!

And if that wasn’t cool enough, SageMaker lets you access all your data sources from anywhere since it comes with an integrated Jupyter notebook instance.

In addition to that, it also provides many off-the-shelf ML algorithms that are optimized to run efficiently against extremely large data in a distributed environment.

And finally, SageMaker allows you to select from a wide array of model training options to find the ones that best suit your specific workflows. It takes only a few clicks to launch your model from SageMaker Studio or the SageMaker console and deploy it to a secure endpoint.

A. Train an ML Model in SageMaker

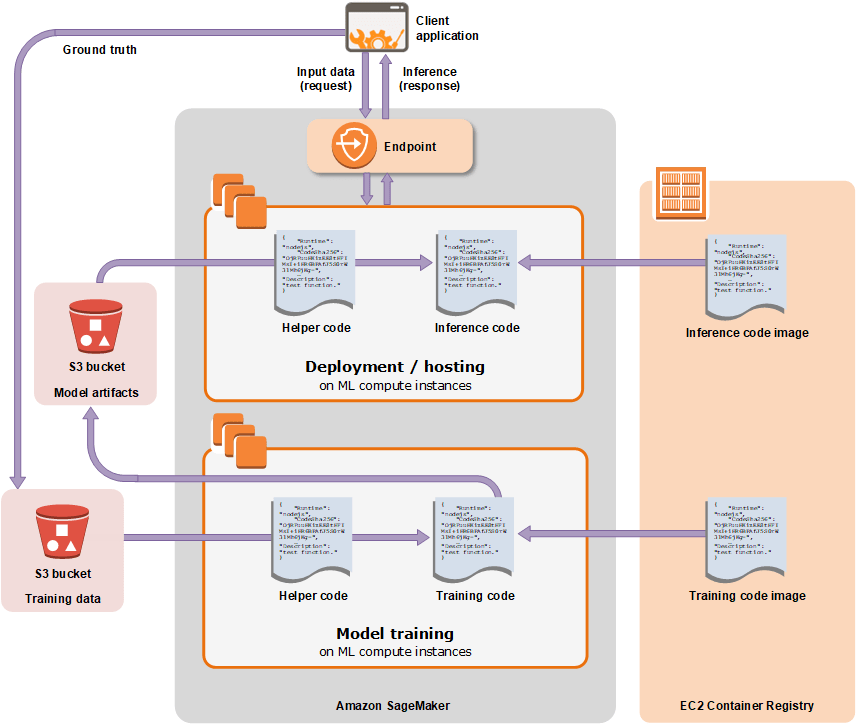

The training and deployment of a model with Amazon SageMaker proceeds in the following manner:

Source: Amazon.com

Use Case

In this tutorial, we’ll be building a simple ML model to predict whether a customer will enrol for a certificate of deposit (CD). We will be training our model on the Bank Marketing Data Set that contains information on customer demographics, responses to marketing events, and external factors.

Let’s get to the steps for building and training an ML model using SageMaker:

1. Open SageMaker Studio from Amazon Console

Requisite alert: You must have an AWS account to complete this tutorial. If you do not already have an account, sign up for AWS and create a new account.

Once you have logged into your AWS account, select SageMaker Studio from the AWS console.

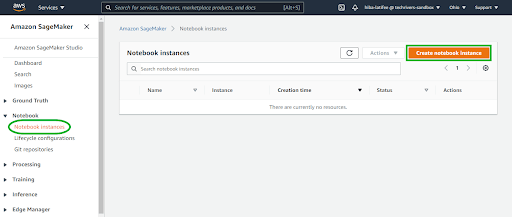

2. Create a SageMaker Notebook Instance

Once you are in Studio, create the notebook instance that you will use to download and process your data.

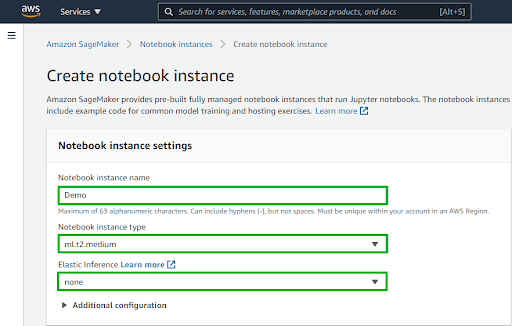

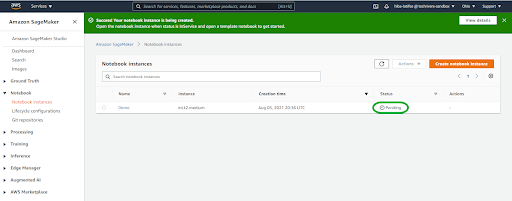

In the left navigation pane, choose Notebook instances, then choose Create notebook instance, as shown above.

On the Create notebook instance page, like the one shown above, in the Notebook instance settings section, fill in the fields as follows:

- For Notebook instance name, type any name of your choice. For this tutorial, we will type Demo.

- For Notebook instance type, choose ml.t2.medium.

- For Elastic inference, keep the default selection of none.
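The same settings can also be expressed programmatically. The sketch below is only an illustration, not part of the console walkthrough: it builds the arguments for the `create_notebook_instance` call of a boto3 SageMaker client using the name and instance type chosen above. The helper names and the role ARN are placeholders of ours, and the client is passed in so that the function can be tried without AWS credentials.

```python
def notebook_instance_request(name, role_arn, instance_type="ml.t2.medium"):
    """Build keyword arguments for SageMaker's CreateNotebookInstance API."""
    return {
        "NotebookInstanceName": name,
        "InstanceType": instance_type,
        "RoleArn": role_arn,  # execution role the notebook will assume
    }


def create_notebook_instance(sagemaker_client, name, role_arn):
    """Create the instance through an injected boto3 client and return its ARN."""
    response = sagemaker_client.create_notebook_instance(
        **notebook_instance_request(name, role_arn)
    )
    return response["NotebookInstanceArn"]


# Hypothetical usage (requires boto3 and AWS credentials):
#   import boto3
#   client = boto3.client("sagemaker")
#   create_notebook_instance(client, "Demo", "<your-execution-role-arn>")
```

The console remains the simpler route for a one-off tutorial; a scripted request like this mostly pays off when the same setup has to be repeated.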

3. Setting up Data Sources, IAM Roles and Data Permissions

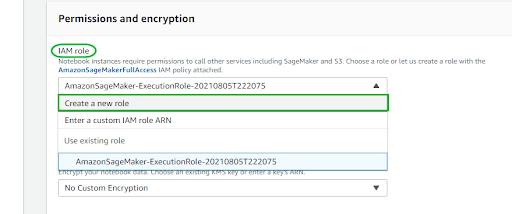

In order to access data in Amazon S3, you have to create an Identity and Access Management (IAM) role.

On the same Create notebook instance page, in the Permissions and encryption section, for IAM role, as shown above, choose Create a new role from the drop-down menu.

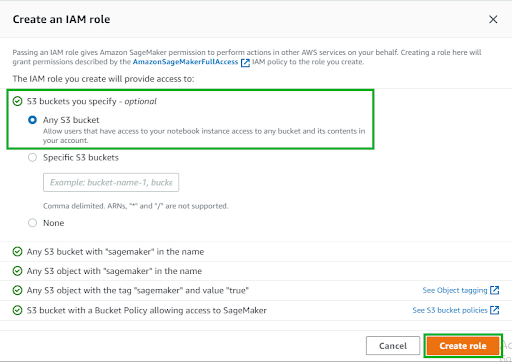

In the Create an IAM role dialog box, choose Any S3 bucket and hit Create role. Alternatively, in order to use an existing bucket of your choice, choose Specific S3 buckets and specify the bucket name.

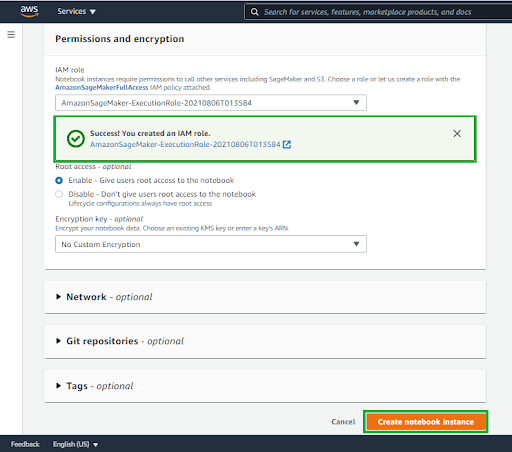

Amazon SageMaker then creates an AmazonSageMaker-ExecutionRole-*** role for you, as shown above. Keep the default settings for the remaining options and hit Create notebook instance.
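To make the idea of an execution role more concrete, here is a rough sketch of the two kinds of policy documents such a role typically involves: a trust policy that lets the SageMaker service assume the role, and a permissions policy scoped to one bucket. This is our own simplified illustration, not the exact policy the console generates, and the function names are hypothetical.

```python
import json


def sagemaker_trust_policy():
    """Trust policy allowing the SageMaker service to assume the role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Service": "sagemaker.amazonaws.com"},
                "Action": "sts:AssumeRole",
            }
        ],
    }


def bucket_access_policy(bucket_name):
    """Permissions policy limited to a single S3 bucket (illustrative only)."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{bucket_name}"],
            },
            {
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject", "s3:DeleteObject"],
                "Resource": [f"arn:aws:s3:::{bucket_name}/*"],
            },
        ],
    }


if __name__ == "__main__":
    print(json.dumps(bucket_access_policy("your-s3-bucket-name"), indent=2))
```

Scoping the policy to a specific bucket mirrors the Specific S3 buckets option in the dialog and is the narrower of the two choices.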

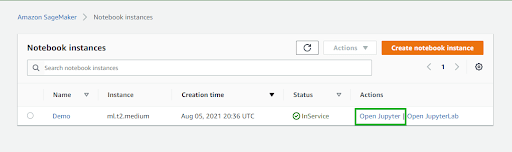

Back on the Notebook instances page, the new Demo notebook instance is displayed with a Status of Pending. The notebook is ready to use once the Status changes to InService. When that happens, we will proceed to prepare the data and import the required libraries into our newly created notebook instance.
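If you would rather wait for the status change from a script than refresh the console, a polling loop along these lines could be used. It is a minimal sketch that assumes a boto3 SageMaker client is passed in; the function name, polling interval, and retry limit are our own choices.

```python
import time


def wait_until_in_service(sagemaker_client, name, poll_seconds=15,
                          max_polls=40, sleep=time.sleep):
    """Poll DescribeNotebookInstance until the instance reports InService."""
    for _ in range(max_polls):
        description = sagemaker_client.describe_notebook_instance(
            NotebookInstanceName=name
        )
        status = description["NotebookInstanceStatus"]
        if status == "InService":
            return status
        if status == "Failed":
            raise RuntimeError(f"Notebook instance {name!r} failed to start")
        sleep(poll_seconds)  # still Pending (or similar): wait and retry
    raise TimeoutError(f"Notebook instance {name!r} was not ready in time")
```

boto3 also provides a built-in waiter for this (`notebook_instance_in_service`), which is usually preferable to a hand-written loop; the explicit version above is shown only to make the status transitions visible.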

4. Prepare Data and Import Libraries

In this step, we will use our Amazon SageMaker notebook instance Demo to preprocess the data that we need to train our ML model on and then upload the data to Amazon S3.

After your Demo notebook instance Status changes to InService, choose Open Jupyter.

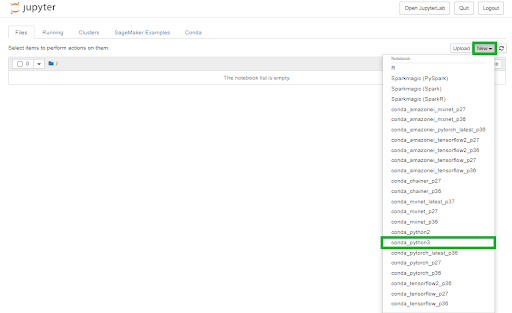

Opening your notebook instance brings up the Jupyter interface, as shown above. Choose New from the drop-down menu in the upper right corner and then select conda_python3.

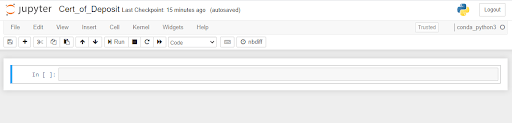

Now, this will open a web-based Jupyter IDE that contains live coding cells for Python programming, as shown above.

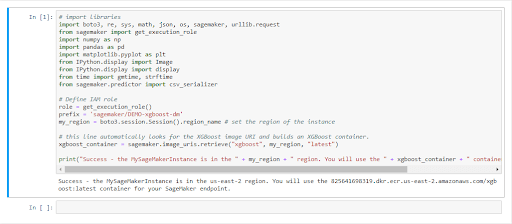

The code below, which goes into a new code cell, imports the required libraries and sets up the environment variables needed to prepare the data, train the model, and deploy it. After typing it into the code cell, choose Run. The output of this code block appears directly beneath the cell.

# import libraries import boto3, re, sys, math, json, os, sagemaker, |

The output of the code block would be as follows:

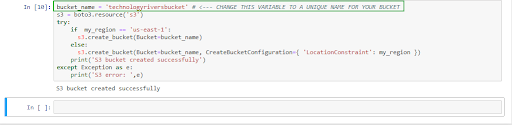

Next, you will create the S3 bucket to store your data, using the code snippet below. Make sure to replace your-s3-bucket-name with a bucket name of your own. Note: S3 bucket names must be globally unique, so the code only reports success if no other bucket already uses the name you pick.

bucket_name = 'your-s3-bucket-name' # <--- CHANGE |

The output of the code would be as follows:

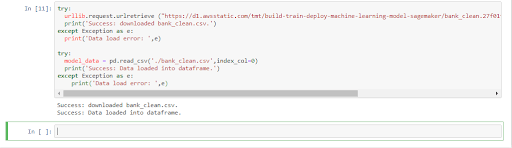
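If bucket creation fails, the name itself may be the problem. The sketch below checks a candidate name against a subset of the S3 naming rules (3 to 63 characters; lowercase letters, digits, dots, and hyphens; starting and ending with a letter or digit; no adjacent dots; not shaped like an IP address). It is a local sanity check of our own, not an AWS API, and it cannot tell you whether the name is already taken.

```python
import re

# First and last character must be a lowercase letter or digit; 3-63 chars total.
_NAME_PATTERN = re.compile(r"[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]")
_IP_PATTERN = re.compile(r"\d{1,3}(\.\d{1,3}){3}")


def is_plausible_bucket_name(name):
    """Return True if the name passes a subset of the S3 bucket naming rules."""
    if not _NAME_PATTERN.fullmatch(name):
        return False
    if ".." in name:  # adjacent periods are not allowed
        return False
    if _IP_PATTERN.fullmatch(name):  # must not look like an IP address
        return False
    return True


if __name__ == "__main__":
    for candidate in ["your-s3-bucket-name", "My_Bucket", "ab", "192.168.5.4"]:
        print(candidate, is_plausible_bucket_name(candidate))
```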

Next, you will download the data to your SageMaker instance and load the data into a dataframe using the code snippet below. Choose Run.

try: urllib.request.urlretrieve |

The output of the code would be as follows:

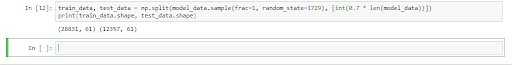

Next, we will shuffle and divide the data into training data and test data.

The training data, which comprises 70% of the customers, is used during the model training loop, where gradient-based optimization iteratively refines the model parameters. Gradient-based optimization uses the gradient of the loss function to adjust the parameters in the direction that reduces the model error.

The test data (remaining 30% of customers) is going to be used to evaluate the performance of the model and measure how well the trained model generalizes to an unseen scenario.
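As a library-free illustration of the idea, the sketch below shuffles a list of records with a fixed seed and cuts it at the 70% mark. It is not the notebook cell from this tutorial; the function name and the seed are our own assumptions.

```python
import random


def shuffle_and_split(rows, train_fraction=0.7, seed=0):
    """Shuffle rows reproducibly, then split them into train and test lists."""
    shuffled = list(rows)  # copy so the caller's data is left untouched
    random.Random(seed).shuffle(shuffled)
    cut = int(train_fraction * len(shuffled))
    return shuffled[:cut], shuffled[cut:]


if __name__ == "__main__":
    train, test = shuffle_and_split(range(10))
    print(len(train), len(test))
```

Shuffling before the cut matters because the rows of a dataset are often ordered (for example by date), and an unshuffled split could leave the test set unrepresentative.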

To perform this split in the notebook, type the following code into the next code cell and choose Run.

train_data, test_data = np.split(model_data.sample |

The output of the above code block would be as follows:

5. Training an ML Model Using Your Training Dataset

Training an ML model with SageMaker involves the following steps:

Create a training job

To train a model in SageMaker, you first create a training job. The training job includes the following information:

- The URL of the Amazon S3 bucket where you’ve stored the training data

- The compute resources to be used for training the ML model

- The URL of the S3 bucket where the output of the job is to be stored

- The Amazon Elastic Container Registry path where the training code is stored
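The four pieces of information above map fairly directly onto the request that the low-level `create_training_job` API of boto3 expects. The sketch below builds such a request as a plain dictionary; the function name, the volume size, the runtime limit, and all argument values are placeholders of ours, and the SageMaker SDK estimator used later in this tutorial assembles an equivalent request for you.

```python
def training_job_request(job_name, image_uri, role_arn, train_s3_uri,
                         output_s3_uri, instance_type="ml.m4.xlarge",
                         instance_count=1):
    """Build keyword arguments for SageMaker's CreateTrainingJob API."""
    return {
        "TrainingJobName": job_name,
        # ECR path of the container that holds the training code
        "AlgorithmSpecification": {
            "TrainingImage": image_uri,
            "TrainingInputMode": "File",
        },
        "RoleArn": role_arn,
        # S3 location of the training data
        "InputDataConfig": [
            {
                "ChannelName": "train",
                "ContentType": "text/csv",
                "DataSource": {
                    "S3DataSource": {
                        "S3DataType": "S3Prefix",
                        "S3Uri": train_s3_uri,
                        "S3DataDistributionType": "FullyReplicated",
                    }
                },
            }
        ],
        # S3 location where the model artifacts are written
        "OutputDataConfig": {"S3OutputPath": output_s3_uri},
        # Compute resources used for training
        "ResourceConfig": {
            "InstanceType": instance_type,
            "InstanceCount": instance_count,
            "VolumeSizeInGB": 10,  # assumed value for illustration
        },
        "StoppingCondition": {"MaxRuntimeInSeconds": 3600},  # assumed limit
    }
```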

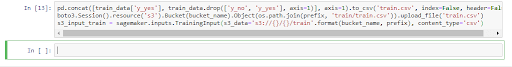

The code block below reformats the header and first column of the training dataset. It then loads the data from the S3 bucket. This step is required before you can use SageMaker’s pre-built XGBoost algorithm.

pd.concat([train_data['y_yes'], train_data.drop |

The output:

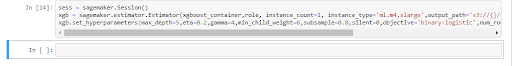
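The reason for the reformatting is that the built-in XGBoost algorithm expects CSV training data with the label in the first column and no header row. The sketch below shows that transformation on a tiny in-memory example using only the standard library; the function name and the sample columns other than `y_yes` are made up for illustration.

```python
import csv
import io


def to_xgboost_csv(csv_text, label_column):
    """Move the label column to the front and drop the header row."""
    reader = csv.reader(io.StringIO(csv_text))
    header = next(reader)
    label_index = header.index(label_column)
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    for row in reader:
        # Label first, then all remaining columns in their original order
        reordered = [row[label_index]] + row[:label_index] + row[label_index + 1:]
        writer.writerow(reordered)
    return out.getvalue()


if __name__ == "__main__":
    sample = "age,y_yes,balance\n30,1,100\n45,0,250\n"
    print(to_xgboost_csv(sample, "y_yes"))
```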

Setting up SageMaker Session

Next, we will set up the Amazon SageMaker session by creating an instance of the XGBoost model (an estimator), and defining the model’s hyperparameters.

Type the following code into a new code cell and choose Run.

sess = sagemaker.Session() xgb = sagemaker.estimator.Estimator(xgboost_container,role, |

The output:

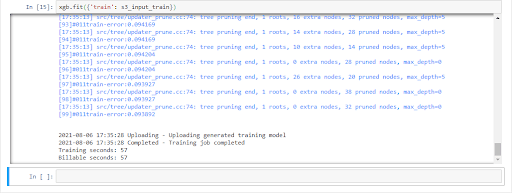

Now, we will start the training job.

The code snippet given below trains the ML model using gradient-based optimization on an ml.m4.xlarge computing instance. After a few minutes, you should see the training logs being generated as output (as shown below) in your Jupyter notebook.

xgb.fit({'train': s3_input_train}) |

The output of the above code would be as follows:

B. Deploying Your ML Model

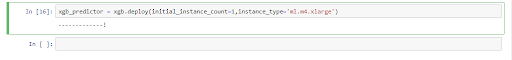

Now that you have a trained ML model, you will deploy it to an endpoint in this step. Additionally, you will reformat and load the data and finally, do a test run of the model for predictions.
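In the notebook, the SageMaker SDK predictor takes care of the request for you. For an application outside the notebook, a test call through the lower-level runtime API could look roughly like the sketch below. It assumes a boto3 `sagemaker-runtime` client is passed in, that the endpoint accepts CSV rows, and that it returns scores separated by commas or newlines; the function name and the 0.5 threshold are our own choices.

```python
def predict_csv(runtime_client, endpoint_name, rows, threshold=0.5):
    """Send feature rows as CSV to an endpoint and return 0/1 predictions."""
    body = "\n".join(",".join(str(value) for value in row) for row in rows)
    response = runtime_client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="text/csv",
        Body=body,
    )
    raw = response["Body"].read().decode("utf-8")
    # Accept either comma-separated or newline-separated scores
    scores = [float(s) for s in raw.replace("\n", ",").split(",") if s.strip()]
    return [1 if score > threshold else 0 for score in scores]


# Hypothetical usage (requires boto3, credentials, and a live endpoint):
#   import boto3
#   runtime = boto3.client("sagemaker-runtime")
#   predict_csv(runtime, "<your-endpoint-name>", [[30, 100], [45, 250]])
```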

The code snippet below deploys the model on a server and creates a SageMaker endpoint that you can access. After typing the code below, choose Run.

xgb_predictor = xgb.deploy |

The deployment takes a few minutes to complete, after which the output appears as follows:

Now, to predict whether the customers in the test data enrolled for the bank product or not, type the following code into the next code cell and hit Run.

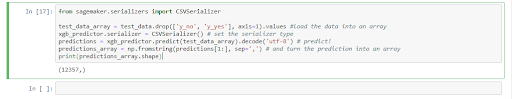

from sagemaker.serializers import CSVSerializer test_data_array = test_data.drop(['y_no', 'y_yes'], axis=1).values |

The output of the above code block comes out as follows:

C. Terminating Resources

Terminating resources that are not actively being used reduces costs and is a recommended practice. Skipping this step will result in continued charges to your account.
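A quick way to see what is still running is to list the endpoints and notebook instances in your account and keep the ones reported as InService. The sketch below does this with an injected boto3 SageMaker client; the function name is ours, and for brevity it only looks at the first page of results from each list call.

```python
def running_resources(sagemaker_client):
    """Return names of endpoints and notebook instances that are InService."""
    endpoints = sagemaker_client.list_endpoints()["Endpoints"]
    notebooks = sagemaker_client.list_notebook_instances()["NotebookInstances"]
    return {
        "endpoints": [
            e["EndpointName"]
            for e in endpoints
            if e["EndpointStatus"] == "InService"
        ],
        "notebook_instances": [
            n["NotebookInstanceName"]
            for n in notebooks
            if n["NotebookInstanceStatus"] == "InService"
        ],
    }


# Hypothetical usage (requires boto3 and AWS credentials):
#   import boto3
#   print(running_resources(boto3.client("sagemaker")))
```

Running a check like this before and after the cleanup steps gives a simple confirmation that nothing was left behind.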

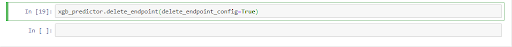

To do that, first delete your endpoint: in your Jupyter notebook, copy the following code into a cell and choose Run.

xgb_predictor.delete_endpoint(delete_endpoint_config=True) |

The output:

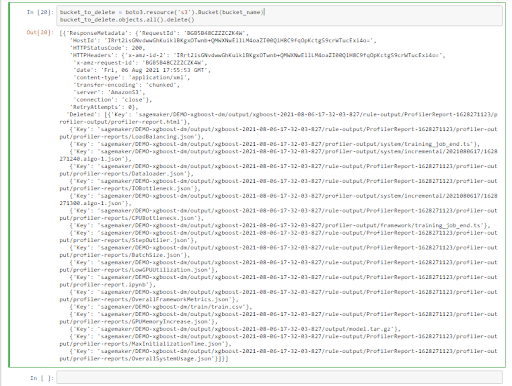

Finally, delete your training artifacts and S3 bucket: in your Jupyter notebook, type the following code and choose Run.

bucket_to_delete = boto3.resource('s3').Bucket(bucket_name) bucket_to_delete.objects.all().delete() |

The output of the above code will be as follows:

And this concludes our journey of training and deploying an ML model using Amazon SageMaker, while also making sure we clean up our resources.

Key Takeaways

In this digital transformation era, organizations are readily employing ML and AI to quickly identify profitable opportunities and potential risks, using huge amounts of accumulated data.

With the advent of cloud computing and mobile devices, ML modeling has become ubiquitous.

Among the many ML/AI applications available for building predictive models, Amazon SageMaker lets you build, train, and deploy ML models rapidly and efficiently.

After training and deploying an ML model in SageMaker, we can evaluate how well it performs on test data it has not seen before.

Cleaning up utilized resources on Amazon SageMaker is a recommended practice.

Are you planning to integrate a Machine Learning Model into your growing tech business? We can help you. Just reach out to us and we can discuss.

Did you find this article helpful? We’d love to hear your thoughts. Like, share, or comment on our social on LinkedIn or Facebook.